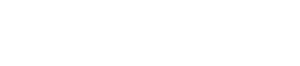

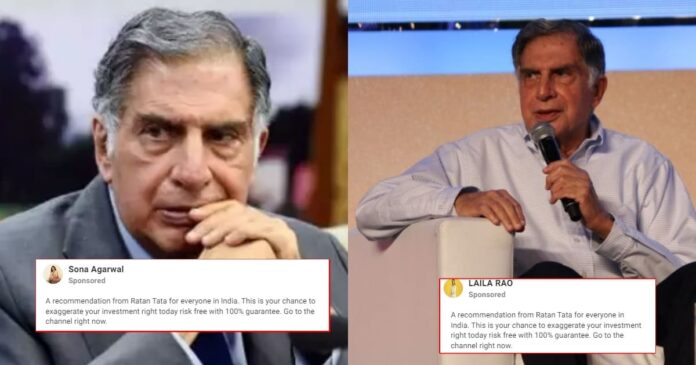

In a concerning development, Ratan Tata, the esteemed industrialist and former chairman of the Tata Group, became the target of a deepfake video circulated on Instagram. Shared by an Instagram user named Sona Agarwal, the video depicted a fabricated scenario in which Tata appeared to offer investment advice, luring viewers with purportedly “risk-free” investment opportunities. In the clip, Tata is shown addressing Agarwal as his manager, which lent the fabricated story a false air of credibility.

The clip was framed as an interview with Tata, and the accompanying caption on Agarwal’s Instagram post stated, “A recommendation from Ratan Tata for everyone in India. This is your chance to amplify your investment today risk-free with a 100% guarantee. Go to the channel right now.”

Deepfake Video of Ratan Tata


The video also displayed messages showing individuals receiving money in their bank accounts. Just a month earlier, Tata had been targeted in a similar way, as numerous videos and WhatsApp forwards circulated claiming that he had pledged a specific sum of money to Afghanistan cricketer Rashid Khan.


Reaction by Ratan Tata

Reacting to these claims on X, Tata firmly stated that he had never made any recommendations to the International Cricket Council (ICC) regarding fines or rewards for cricketers, and emphasised that he has no connection to cricket whatsoever.

I have made no suggestions to the ICC or any cricket faculty about any cricket member regarding a fine or reward to any players.

I have no connection to cricket whatsoever

Please do not believe WhatsApp forwards and videos of such nature unless they come from my official…

— Ratan N. Tata (@RNTata2000) October 30, 2023

These incidents are not isolated but part of a concerning trend in which prominent figures are targeted with falsified digital content. Actress Priyanka Chopra recently faced a similar situation when a manipulated video surfaced depicting her endorsing a brand and allegedly revealing her annual income. Unlike in some other deepfake cases, Priyanka’s face was left unaltered; instead, her voice and the context of the original video were manipulated, raising doubts about the authenticity of digital content.

Deepfake videos wreaking havoc?

In another alarming incident, a deepfake video purportedly featuring actress Alia Bhatt emerged. The manipulated footage superimposed Bhatt’s face onto another woman’s body, creating the misleading impression that she was involved in an entirely unrelated scenario. These instances underline growing concerns about the widespread use of deepfake technology, which risks deceiving unsuspecting audiences.

Responding to this urgent issue, Union Minister Rajeev Chandrasekhar held discussions with social media platforms to evaluate the progress they had made in combating misinformation and deepfakes. Chandrasekhar said advisories would be issued within the next two days and stressed the need for 100% compliance from the platforms to curb the spread of deceptive content.

The troubling proliferation of deepfake videos targeting well-known personalities underscores the pressing need for stringent measures against this escalating threat to online credibility and authenticity. As the technology evolves, the fight against misinformation becomes increasingly crucial, requiring collaborative efforts to uphold the integrity of digital content and shield individuals from fraudulent portrayals.
