A deepfake video featuring actress Priyanka Chopra has circulated widely online. Unlike in other instances, Priyanka Chopra’s facial appearance remains unaltered in this manipulated video. Instead, her voice has been manipulated, replacing the authentic audio with a fabricated brand endorsement. In this deepfake content, she goes on to disclose her yearly income while promoting the mentioned brand.

Priyanka Chopra talking to Beer Biceps in Deepfake video

The creator manipulated the audio using clips from YouTuber Ranveer Allahbadia, known as Beer Biceps, substituting a fabricated endorsement for her original statements. In the altered video, she introduces herself as Priyanka Chopra, claiming to engage in acting, modelling, and singing, and stating that she earned 10,000 lakh in 2023.


She goes on to mention investments in various projects beyond her work in movies and music. Towards the end, she promotes a friend’s project, suggesting potential earnings of 300,000 rupees weekly through advice shared on Telegram using her name. As of now, Priyanka has not issued a response to this manipulated audio.

Victims other than Priyanka Chopra

By leveraging deepfake technology, individuals can manipulate videos to make it appear as if someone is saying or doing things they never did in reality. This poses a risk: malicious actors can exploit the tool to disseminate misinformation and damage the reputation of unsuspecting individuals.

I feel really hurt to share this and have to talk about the deepfake video of me being spread online.

Something like this is honestly, extremely scary not only for me, but also for each one of us who today is vulnerable to so much harm because of how technology is being misused.…

— Rashmika Mandanna (@iamRashmika) November 6, 2023

Several renowned celebrities, such as Alia Bhatt, Katrina Kaif, Kajol, and Rashmika Mandanna, have fallen prey to this technology. In a recent manipulated video featuring Alia Bhatt, the face of another woman was altered to resemble Alia’s. The video depicted her sitting on a bed in a strappy dress and making inappropriate gestures towards the camera.


In response to the escalating deepfake threat, the Indian government has taken notice, prompting Union Minister Rajeev Chandrasekhar to hold discussions with social media platforms. During the meeting, he assessed the platforms’ progress in addressing misinformation and deepfakes. Chandrasekhar further declared that the government would soon release advisories for social media platforms, emphasising the need for complete compliance.

Union Minister Rajeev Chandrasekhar tweet

In a post on X, the minister wrote, “A new amended IT Rules to further ensure compliance of platforms, and safety & trust of Digital Nagriks is actively under consideration”.

Held the 2nd #DigitalIndiaDialogues on Misinformation and #Deepfakes with intermediaries today, to review the progress made since the Nov 24 meeting.

Many platforms are responding to the decisions taken last month and advisories on ensuring 100% compliance will be issued in… pic.twitter.com/fEiTTMUGQU

— Rajeev Chandrasekhar 🇮🇳 (@Rajeev_GoI) December 5, 2023

PTI reported that the majority of social media platforms have adhered to the rules, while those progressing at a slower pace have been granted additional time. The government has explicitly stated its commitment to maintaining a “zero tolerance policy” regarding user harm resulting from misinformation and deepfakes. It has been disclosed that a section under the CrPC enables the prosecution of deepfakes on grounds of “forgery.”

According to an insider speaking to PTI, “We will assess whether advisories alone are sufficient or if we need to introduce new amended rules within the next seven days. If required, we will implement a more stringent set of rules, emphasising enforcement and deterring those who misuse the platforms.”

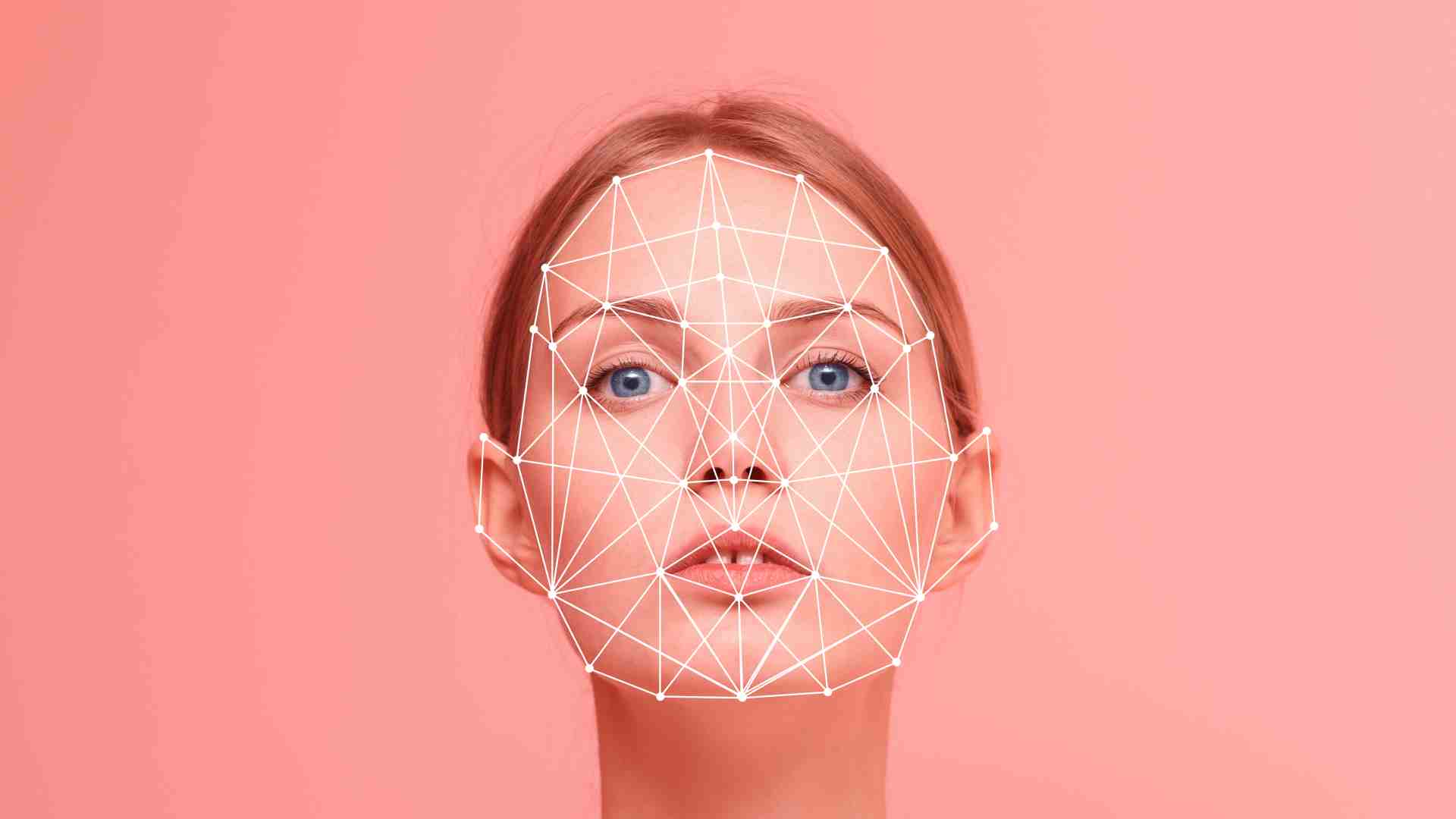

What is a Deepfake video?

The term “deepfake” is a fusion of “deep learning” and “fake,” denoting videos that undergo alterations through algorithms to substitute the original person with another, often a public figure, in a manner that convincingly mimics authenticity. Deepfakes employ a type of artificial intelligence known as deep learning to create fabricated visuals of events that never occurred. The prevalence of deepfake videos has surged with the proliferation of various AI tools. Some of these AI tools are accessible at no cost, further compounding the issue of counterfeit photos, videos, and audio recordings.
